The Forgotten Crisis

This essay is part of Thinking in Public, a series exploring the uncertain, exponential moment we’re living through. Each piece looks through a systems lens at how AI, politics, and capitalism are reshaping one another — and, ultimately, society itself. Writing these essays is my way of making sense of what’s happening — to process out loud, to self-soothe, and to share in case it helps others do the same. Read the series preface here.

The Moment We Almost Had

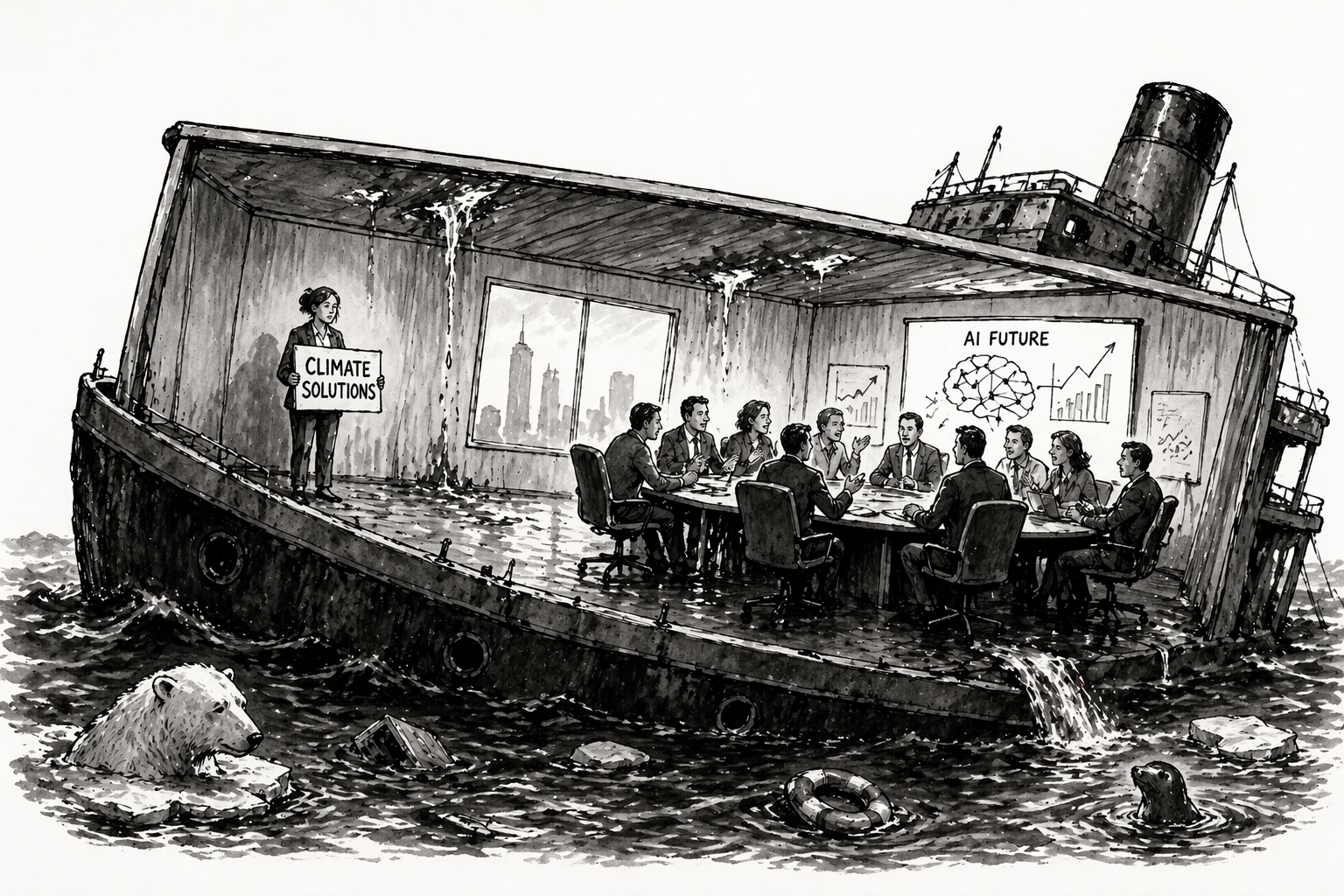

Just as the climate crisis finally felt like it had real momentum, something else took the spotlight.

For a brief moment, it seemed like the world was actually mobilizing around climate. In 2022, the U.S. passed the Inflation Reduction Act, committing roughly $370 billion to climate and energy. Globally, climate tech investment surged, with venture funding reaching on the order of $70–80 billion annually in the early 2020s.

For a few years, climate became one of the largest coordinated deployments of capital in modern history. It wasn’t enough. But it was real progress — a breakthrough in aligning policy, capital, and industrial strategy around decarbonization for the first time in U.S. history.

The One-Two Punch

Then came a one-two punch.

First came a political shift that began to unwind that momentum: pulling back funding, weakening commitments, and reintroducing uncertainty. Second, and at the same time, the rapid rise of AI pulled capital, talent, and societal attention toward a new, more immediate frontier.

The center of gravity moved.

This shift isn’t irrational. AI may be the most disruptive technological force we’ve ever encountered — the emergence of a new kind of intelligence already reshaping labor markets, capital flows, and the structure of the economy itself.

But it raises a question: what happens when one existential crisis eclipses another?

The Privilege of Stability

Climate action has always depended on something deeper than technology.

It assumes we live in a world capable of long-term thinking — one with institutions stable enough, markets patient enough, and societies coordinated enough to sustain effort over decades.

We were never particularly good at that. But for a moment, it felt like we might be making progress. The combination of policy, capital, and industrial alignment suggested that large-scale coordination was possible.

That moment now feels fragile.

Political systems are fragmenting. Media ecosystems are splintering. Trust is eroding, and time horizons are collapsing. The same forces reshaping labor and power through AI are also destabilizing the institutions required to address long-term problems. (This is the core argument of my post on The Last Social Contract.)

Climate was never just a technical challenge. It was a coordination challenge that assumed an underlying system able, at least temporarily, to hold together.

That assumption is no longer safe.

The New Gravity Well

AI isn’t just another issue competing for attention. It has become a gravity well — pulling in capital, talent, policy focus, and strategic urgency.

And in many ways, it should.

AI is a profoundly disruptive technology — a once-in-a-generation, perhaps once-in-a-civilization shift with the potential to reshape nearly every system we rely on.

Nations see it as a competitive race. Companies see it as an existential opportunity. Individuals are already feeling its effects on their work and livelihoods.

Compared to that, climate feels slower and less immediate. Its risks are distributed, its timelines stretched, its feedback loops harder to perceive in real time.

So attention shifts — not because climate is solved, but because AI feels urgent.

And while we focus on building intelligence, the atmosphere continues to accumulate carbon.

The Crisis Beneath the Crisis

It’s tempting to see climate and AI risk as separate problems.

They’re not.

They are both expressions of the same underlying dynamic: our inability to coordinate at the civilizational scale these systems require.

Climate change is a tragedy of the commons — a shared atmosphere gradually degraded by individually rational actions.

AI is rapidly becoming something similar: a competitive race between companies and countries where slowing down feels like losing, even as the pace of progress risks destabilizing labor markets, economic systems, and the balance of power itself. And beyond those near-term disruptions lies a deeper, more existential risk: that we may ultimately create forms of intelligence we are unable to control.

Each actor pushes forward because it makes sense to do so. But collectively, we risk building systems faster than we can govern them.

Different domains, same structure: individual incentives producing collective failure.

The Hard Truth

This isn’t about bad actors (though they certainly exist). It’s about systems that reward short-term advantage even when it produces long-term instability.

Which leads to an uncomfortable conclusion: these are not problems we can solve independently.

They require coordination — systems that align incentives across actors at scale. Not necessarily larger governments, but more effective forms of governance, whether formal or emergent, that can manage shared risks and shared resources.

Without that coordination, AI accelerates the problem. With it, AI becomes part of the solution.

The Unexpected Opportunity

This is where the story shifts.

AI may not just be distracting us from climate. It may be forcing us to confront the deeper coordination problem that climate has always represented.

Because governing AI is quickly becoming unavoidable. Questions around safety, alignment, and global competition are already pushing toward some form of coordination — however imperfect.

If we can learn to coordinate in that domain — to align incentives across powerful actors in a fast-moving, high-stakes system — we may be building the infrastructure we’ve always needed.

The same infrastructure required to address climate.

Where This Leaves Us

I still work in climate tech. But I’m starting to see it differently — not just as an environmental or entrepreneurial challenge, but as a test of whether humanity can coordinate effectively as a global civilization.

Because that’s the real bottleneck. Not technology. Not capital. Not awareness.

Coordination and collaboration.

Maybe the real story isn’t that we forgot the climate crisis. Maybe it’s that we finally ran into the deeper problem it was always pointing to.

This is my hope for the AI crisis we now find ourselves in: that it pushes us to come together, as a single human civilization, to act in our collective interest — before it’s too late.